The solution ideas we will present are not new to numerical analysis. They were also implemented, in a different fashion, for Disney’s snow solver (Gast et al. [2015]). We adapted them to SPH and improved upon them using our expertise.

A question we have deliberately left out so far is: Where does an iterative method start? From the visualization it becomes apparent that the closer a guess vG already is to the solution vt+Δ, the fewer iterations we need. If someone gave you a list of “random” linear systems to solve, your starting point would be just as random, because you would know nothing about the systems. Physics, however, is more predictable.
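To make the effect of the starting point concrete, here is a minimal sketch in plain Python. It is not the PreonLab solver: the Jacobi iteration, the small tridiagonal test matrix and the names `jacobi`, `iters_cold` and `iters_warm` are all assumptions chosen purely for illustration. The same system is solved twice, once from a guess with no prior knowledge and once from a guess close to the solution.

```python
def jacobi(A, b, x0, tol=1e-8, max_iter=10_000):
    """Solve A x = b with Jacobi iteration; return (x, iterations used)."""
    n = len(b)
    x = list(x0)
    for k in range(max_iter):
        # Residual r = b - A x measures how far x is from solving the system.
        r = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        if max(abs(ri) for ri in r) < tol:
            return x, k
        # Jacobi update: correct each unknown using only its diagonal entry.
        x = [x[i] + r[i] / A[i][i] for i in range(n)]
    return x, max_iter


# Hypothetical test system: a diagonally dominant tridiagonal matrix.
n = 20
A = [[4.0 if i == j else -1.0 if abs(i - j) == 1 else 0.0 for j in range(n)]
     for i in range(n)]
x_true = [1.0] * n
b = [sum(A[i][j] * x_true[j] for j in range(n)) for i in range(n)]

# Cold start: all zeros, i.e. no knowledge about the solution.
_, iters_cold = jacobi(A, b, [0.0] * n)
# Warm start: a guess that is already close to the solution.
_, iters_warm = jacobi(A, b, [xi + 1e-3 for xi in x_true])

print("cold start iterations:", iters_cold)
print("warm start iterations:", iters_warm)
```

The warm start reaches the same tolerance in fewer iterations because the error it has to remove is smaller from the very first step.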

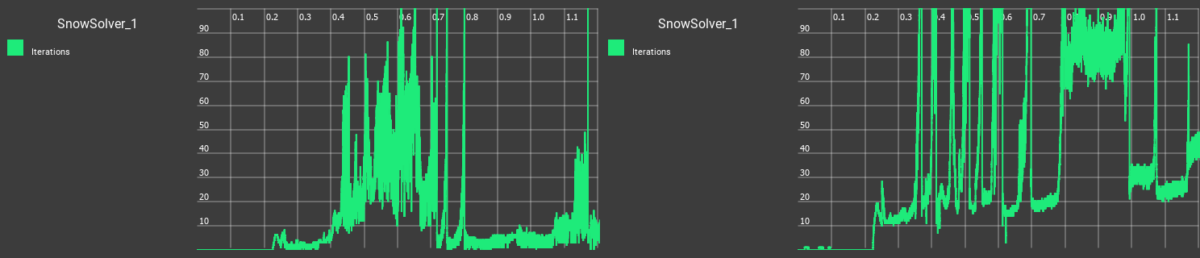

Prior to PreonLab 5.0, the solver simply started from v*. This guess consists of the velocity vt of the previous timestep plus all accelerations that are already known, such as gravity and airflow. It is a good guess when the simulation is dominated by these forces, e.g. in free fall or for wind-driven snow. It is also very good at keeping a simulation static: Any noisy accelerations created by the solver are overshadowed by gravity, which presses the snow towards the rigid boundary.

Another method to obtain an initial guess is mentioned in Weiler et al. [2018]. It is used for the viscosity solver in PreonLab. In the snow solver, the idea is to reuse the change in velocity that the solver determined in the last timestep, Δv=vt−v*. This change in velocity is the correction for elastic accelerations that the solver applied in the last timestep. The guess therefore assumes that the correction from the last timestep stays the same. It is very good at propagating momentum through the snow medium. It also does not push particles towards the solid boundary by default. Solid boundaries can be a hard boundary condition to satisfy and are the most likely cause of the jumps in iterations we saw above.
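The two guesses described so far can be sketched as follows. This is an illustrative reading of the text, not PreonLab code: the function names, the explicit update v* = vt + Δt·a and the one-dimensional resting particle are assumptions made for the example.

```python
def predicted_velocity(v_t, accel_known, dt):
    """Guess 1: v* = v_t + dt * a_known, using only already known accelerations."""
    return [v + dt * a for v, a in zip(v_t, accel_known)]


def reuse_last_correction(v_star_new, v_solved_last, v_star_last):
    """Guess 2: new v* plus the correction dv = v_t - v* found in the last timestep."""
    return [vs + (v - s) for vs, v, s in zip(v_star_new, v_solved_last, v_star_last)]


# Hypothetical 1D example: one particle resting on the ground under gravity.
dt = 0.01
gravity = [-9.81]

# Last timestep: the particle was at rest, v* pointed into the ground,
# and the solver corrected the velocity back to zero.
v_star_last = predicted_velocity([0.0], gravity, dt)
v_solved_last = [0.0]

# Current timestep: build both candidate guesses.
guess_1 = predicted_velocity(v_solved_last, gravity, dt)
guess_2 = reuse_last_correction(guess_1, v_solved_last, v_star_last)

print("guess 1 (v*):", guess_1)
print("guess 2 (v* + dv):", guess_2)
```

In this toy setup the first guess still carries the gravity term that points into the boundary, while the second guess has already cancelled it with the correction from the last timestep.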

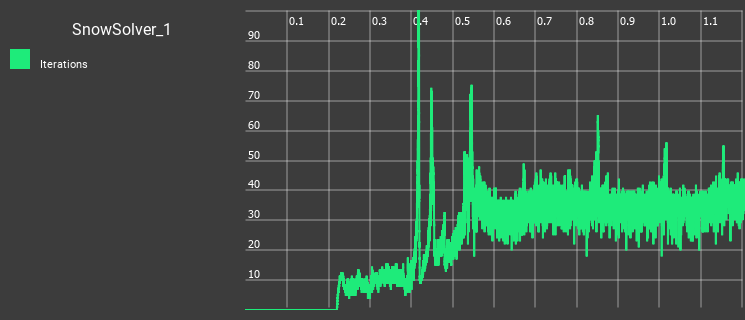

It would be nice to combine different guesses to play to their respective strengths, and that’s precisely what we do. Gast et al. [2015] show that you can recast the equation-solving problem (based on forces) as an optimization problem (based on energies). Energy is a scalar and should attain a minimum at the end of our solve. This makes energy a good measure of the quality of an initial guess, so we use the minimization of energy as a guide to find one. Gast et al. [2015] propose to make this decision globally, once per timestep, for all particles. We deviate from this method and let each particle decide locally which initial guess is best for it. To do so, we assign each particle a local energy: A particle goes through each guess and assumes that all particles in its neighborhood move with the same guess. We evaluate the local energy for each guess, and the particle then picks the guess with the lowest local energy. The cost of evaluating a single guess is close to half an iteration, so evaluating two guesses pays off as soon as it saves one iteration.
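The per-particle selection can be sketched with a toy model. Everything here is an assumption made for illustration and not the energy used in PreonLab: a 1D chain of particles connected by springs, a local energy made of an inertial term plus the spring energy of the bonds attached to the particle, and the names `local_energy` and `pick_guess_per_particle`.

```python
def local_energy(i, guess, x, v_star, neighbors, rest_len, mass, stiffness, dt):
    """Toy local energy of particle i if it and its neighbors all follow `guess`."""
    # Inertial term: penalizes deviating from the prediction v*.
    dv = guess[i] - v_star[i]
    energy = 0.5 * mass * dv * dv
    # Elastic term: spring energy of the bonds attached to particle i,
    # evaluated at the positions predicted by the guess.
    xi = x[i] + dt * guess[i]
    for j in neighbors[i]:
        xj = x[j] + dt * guess[j]
        stretch = abs(xj - xi) - rest_len
        energy += 0.5 * stiffness * stretch * stretch
    return energy


def pick_guess_per_particle(candidates, x, v_star, neighbors,
                            rest_len, mass, stiffness, dt):
    """For each particle, return the index of the candidate with the lowest local energy."""
    picks = []
    for i in range(len(x)):
        energies = [local_energy(i, g, x, v_star, neighbors,
                                 rest_len, mass, stiffness, dt)
                    for g in candidates]
        picks.append(min(range(len(candidates)), key=energies.__getitem__))
    return picks


# Hypothetical chain of three particles; the bond between 0 and 1 is compressed.
x = [0.0, 0.8, 1.8]
neighbors = [[1], [0, 2], [1]]
v_star = [0.0, 0.0, 0.0]
guess_a = list(v_star)          # candidate 0: start from v*
guess_b = [-1.0, 0.0, 1.0]      # candidate 1: v* plus a made-up reused correction

picks = pick_guess_per_particle([guess_a, guess_b], x, v_star, neighbors,
                                rest_len=1.0, mass=1.0, stiffness=1000.0, dt=0.1)
# Assemble the mixed initial guess from the per-particle choices.
v_init = [[guess_a, guess_b][p][i] for i, p in enumerate(picks)]
print(picks, v_init)
```

In this example the two particles next to the compressed bond prefer the second candidate, because it relaxes the bond, while the third particle keeps the first candidate, because the second would stretch its only bond. A global decision could not make that distinction.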

How is our wedge doing with this idea?